Florida authorities have reportedly opened a criminal investigation into OpenAI over allegations that its chatbot, ChatGPT, may have provided guidance linked to a deadly mass shooting at Florida State University.

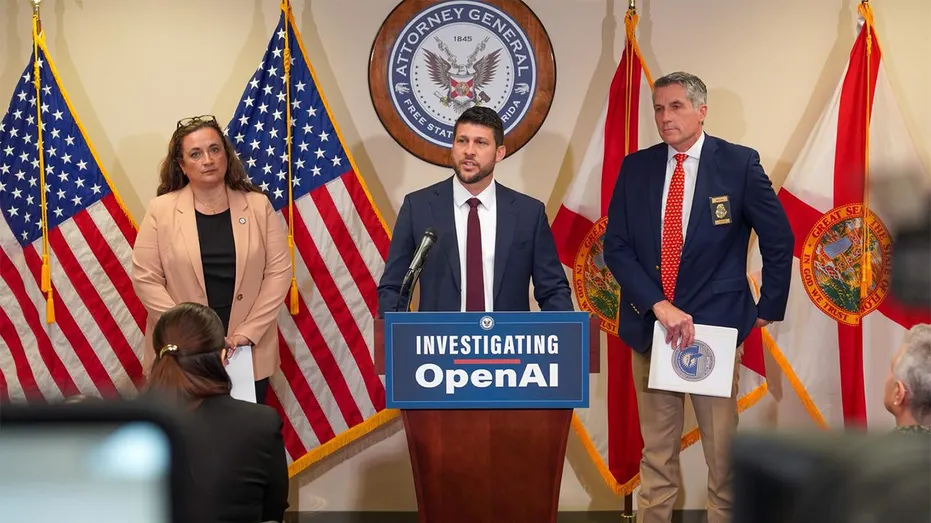

Florida Attorney General James Uthmeier said on Tuesday that investigators are examining claims that the suspect used ChatGPT before carrying out the attack, which left two people dead.

According to Uthmeier, early findings suggest the AI tool may have offered "significant advice" to the suspect, including discussions of weapons, timing, and possible locations on campus. He said prosecutors are reviewing whether such interactions, if proven, could give rise to criminal liability comparable to that of a human accomplice.

The suspect, a 20-year-old student identified as Phoenix Ikner, is currently in custody awaiting trial.

In response, OpenAI has denied any responsibility, stating that ChatGPT did not encourage or assist in any criminal activity. The company said its system provides only general information drawn from publicly available data and should not be blamed for the incident.

OpenAI also confirmed that it has cooperated with investigators and provided relevant account data linked to the suspect, while maintaining that its platform is designed with safeguards to prevent misuse.

The case marks one of the first known criminal investigations involving allegations that an artificial intelligence system contributed to planning a violent crime, raising broader legal and ethical questions about AI responsibility.

Authorities in Florida argue that if similar guidance had come from a human, it could be treated as aiding or encouraging a criminal act under state law.

OpenAI, co-founded by Sam Altman, has faced increasing scrutiny over the safety of its AI tools following previous incidents in which its chatbot was allegedly used by individuals involved in violent attacks.

The development has also renewed concerns among US regulators and state officials, who have previously warned major tech companies about the risks of AI misuse and called for stronger safeguards.